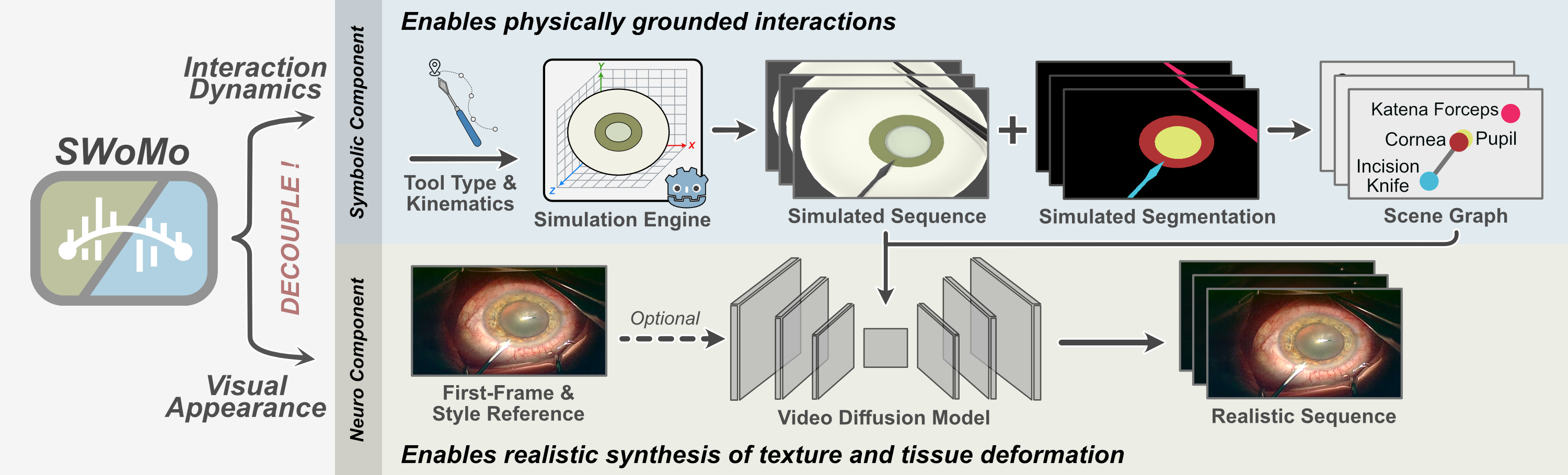

Realistic surgical simulation plays a crucial role in training novice surgeons and in the development of autonomous agents. World models can scale such simulation environments to realistic and diverse procedures by predicting future patient states conditioned on current observations and surgical actions. However, current state-of-the-art approaches often fail to satisfy key criteria required for clinical applicability, including visual realism, physically grounded interactions, and the ability to simulate scenarios beyond the training distribution. Hence, we introduce SWoMo, a neuro-symbolic world model for cataract surgery simulation that decouples motion generation from visual realism. The symbolic component, consisting of a rule-based simulator and scene graph representations, models motion dynamics and tool–tissue interactions, while a diffusion model produces realistic visual appearance, including textures and tissue deformations. We propose an inverse pairing strategy that reconstructs real surgical videos in the simulator to obtain paired simulated and real videos, which are then used to train our video diffusion model for the reverse objective of sim-to-real translation. Our experiments show both qualitative and quantitative improvements over prior work. We demonstrate that our simulator further satisfies the key criteria, including generalisation to unseen interaction geometries, improvements in downstream phase detection, and unsupervised video style transfer.

For unsupervised video style transfer, we combine the simulated sequence from the source domain with initial-frame conditioning from the target domain to synthesise videos in the target style.

The intermediate simulated sequence provides explicit structural and motion guidance, which lets the model generalise to interaction geometries outside the training distribution. Using the simulator, we generate difficult cases, including tools with novel entry directions and tool combinations that never co-occur in the training data.
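As a rough illustration of how tool combinations that never co-occur could be enumerated before being staged in the simulator, the sketch below lists all tool pairs absent from a set of training clips. The function name `unseen_tool_pairs`, the tool names, and the per-clip granularity of co-occurrence are assumptions made for this example and are not taken from the SWoMo implementation.

```python
from itertools import combinations


def unseen_tool_pairs(training_clips):
    """Return tool pairs that never appear together in any training clip.

    training_clips: iterable of iterables of tool names, one per clip
    (hypothetical input format, assumed for this sketch).
    """
    seen_pairs = set()
    all_tools = set()
    for clip_tools in training_clips:
        unique_tools = sorted(set(clip_tools))
        all_tools.update(unique_tools)
        # Every pair inside one clip counts as an observed co-occurrence.
        seen_pairs.update(combinations(unique_tools, 2))
    # Candidate pairs are all pairs over the full tool vocabulary.
    candidate_pairs = set(combinations(sorted(all_tools), 2))
    return sorted(candidate_pairs - seen_pairs)


if __name__ == "__main__":
    clips = [["forceps", "knife"], ["knife", "phaco_tip"]]
    print(unseen_tool_pairs(clips))
```

Pairs are stored in sorted order so that `("forceps", "knife")` and `("knife", "forceps")` are treated as the same combination.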

We introduce an inverse pairing strategy in which tool and anatomical motions are extracted from real surgical videos and replayed in the simulator, producing large-scale paired simulated and real videos. The simulator also produces the corresponding segmentations. We first segment the pupil and iris with nnU-Net and fit ellipses to the resulting masks, yielding compact geometric parameters that encode centroid, orientation, and axis lengths. Eye globe motion is decomposed into global and local components. Global motion, arising from camera or patient movement, is estimated by annotating skin landmarks in the first frame and tracking them over time with a pre-trained CoTracker model. The resulting trajectories define a global transformation used to estimate the displacement of the eye globe centroid. Local motion corresponds to rotational movement of the eye globe and is derived from the centroid trajectory of the pupil mask, yielding rotational parameters. Tool kinematics are recovered from tool masks generated using SASVi and a SAM2-based manual interactive annotation tool. From these masks, we extract geometric parameters such as tool tip position and orientation, and articulation parameters such as bending angle and opening angle.
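The geometric steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' pipeline: the ellipse is fitted from second-order image moments (the text does not specify the fitting method), and the global transformation is simplified to a pure translation averaged over tracked landmarks. The function names `fit_ellipse_from_mask` and `split_motion` are hypothetical.

```python
import numpy as np


def fit_ellipse_from_mask(mask):
    """Moment-based ellipse fit for a binary mask (assumed method)."""
    ys, xs = np.nonzero(mask)
    if xs.size < 3:
        raise ValueError("mask has too few foreground pixels")
    pts = np.stack([xs, ys], axis=1).astype(float)
    centroid = pts.mean(axis=0)
    # 2x2 covariance of the foreground pixel coordinates.
    cov = np.cov((pts - centroid).T)
    evals, evecs = np.linalg.eigh(cov)  # eigenvalues in ascending order
    evals = np.clip(evals, 0.0, None)
    # For a filled ellipse, the variance along an axis is (semi-axis)^2 / 4.
    semi_minor, semi_major = 2.0 * np.sqrt(evals)
    major_dir = evecs[:, 1]
    # Orientation of the major axis in image coordinates, folded into [0, pi).
    angle = float(np.arctan2(major_dir[1], major_dir[0]) % np.pi)
    return {
        "centroid": (float(centroid[0]), float(centroid[1])),
        "semi_major": float(semi_major),
        "semi_minor": float(semi_minor),
        "angle_rad": angle,
    }


def split_motion(landmarks_0, landmarks_t, pupil_0, pupil_t):
    """Split pupil centroid displacement into global and local parts.

    Assumes the global motion is a pure translation, estimated as the
    mean displacement of tracked skin landmarks between two frames.
    """
    l0 = np.asarray(landmarks_0, dtype=float)
    lt = np.asarray(landmarks_t, dtype=float)
    global_shift = (lt - l0).mean(axis=0)
    total_shift = np.asarray(pupil_t, dtype=float) - np.asarray(pupil_0, dtype=float)
    local_shift = total_shift - global_shift
    return global_shift, local_shift
```

In this simplified view, the residual `local_shift` is the part of the pupil displacement that is not explained by camera or patient movement. Converting it into rotational parameters would additionally require an eye globe radius, which is not given here.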

@article{sivakumar2026swomo,
  title={SWoMo: Neuro-Symbolic World Model for Cataract Surgery Simulation},
  author={Sivakumar, Ssharvien Kumar and Johnson, Akwele and Dhingra, Anirudh and Frisch, Yannik and Ghazaei, Ghazal and Mukhopadhyay, Anirban},
  journal={arXiv preprint arXiv:xxxx.xxxxx},
  year={2026}
}